EgoSchema × Action100M Viewer

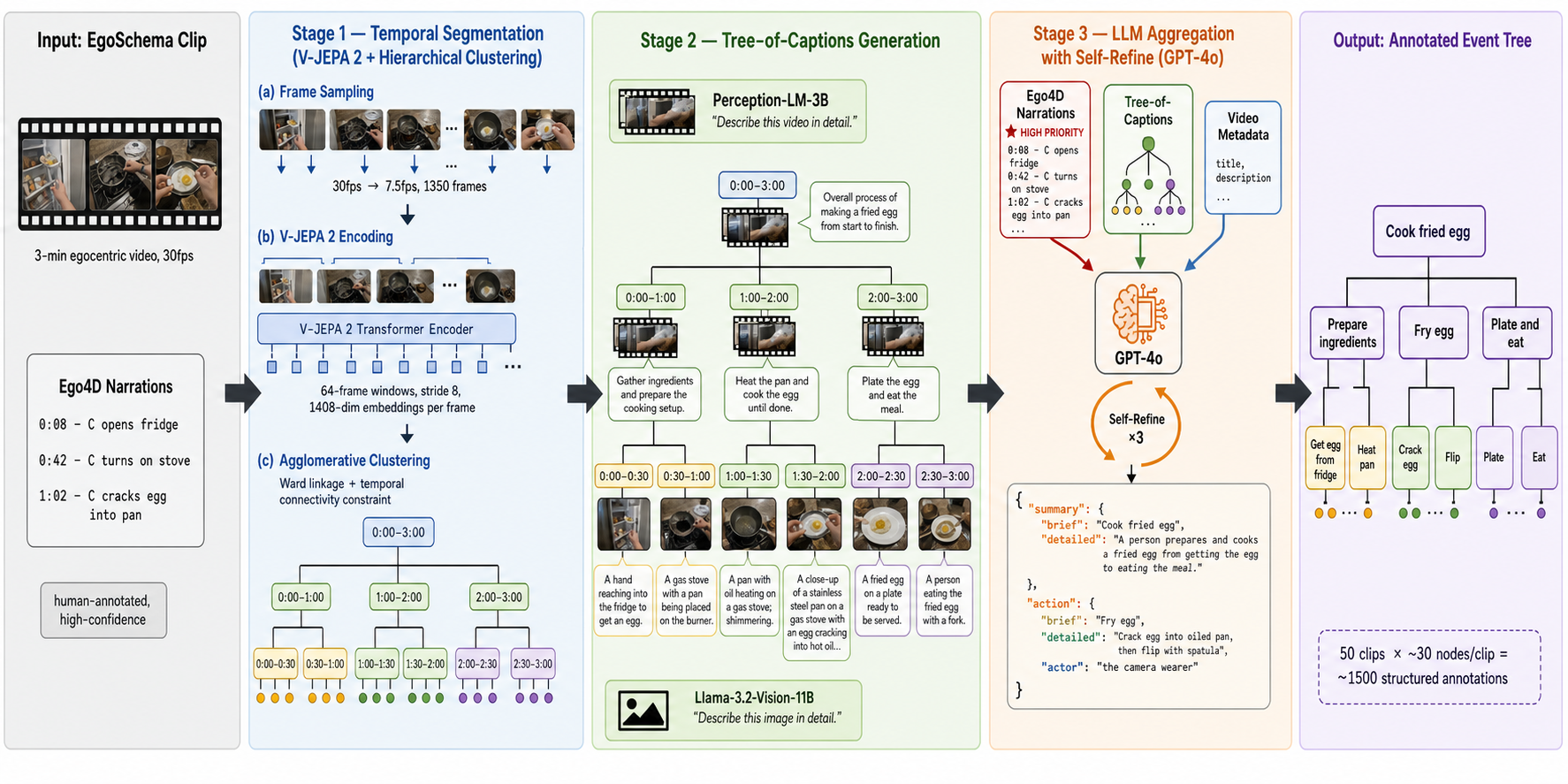

This page shows the hierarchical dense action annotation pipeline from Action100M (Chen et al., 2026), ported to 50 three-minute egocentric clips from EgoSchema. Each clip is segmented by V-JEPA 2 with Ward agglomerative clustering, captioned by Llama-3.2-Vision and Perception-LM, and aggregated by GPT-4o with 3-round Self-Refine. Click any card to open an interactive viewer with the source video, hierarchical timeline, and per-node annotations.

00faf954…

A person shapes mud into bricks using a wooden mold.

0d01c24b…

A person crafts a paper flower at a small table.

0f0d2135…

A woman packs groceries and interacts with a customer.

156683f3…

A person repairs a scooter in a workshop.

1d37d8e5…

A person cleans the kitchen and explores the house.

22a04ca6…

A man prepares scrambled eggs in a messy kitchen.

2b1ad004…

A woman plays cards while feeding a lizard.

2b960c7d…

A person exercises and makes coffee.

3581bcf8…

A person is crafting bricks using clay and sand.

3b50beeb…

A person cuts and peels dried fruits at a table.

420fa606…

Two men engage in card playing and note-taking at a table.

4785bf2e…

A person organizes groceries and cleans the kitchen.

4aa10456…

A person cleans and examines books on the floor.

4c3b9dd4…

A person prepares and cooks a dish.

52e48527…

A woman cuts yellow fabric with scissors.

5e43992d…

A person prepares and cooks a meal in a kitchen.

604acf21…

A man welds and smooths a metal pipe.

670945d6…

A person navigates a house, interacting with items and observing surroundings.

696392dd…

A person assembles a wooden project using glue and small blocks.

7b904a75…

A person controls a robot vacuum while another cleans the kitchen and someone else uses a phone.

7d5b057b…

A woman irons various fabrics on an ironing board.

7df39cbd…

A person is knitting at a table with various items.

8350d2b3…

A person organizes clothes by taking them out of a wardrobe and placing them on a bed.

84be1093…

A man paints a wooden door and board yellow.

8782618b…

A person washes dishes in a kitchen sink.

920d35a6…

A person cooks and prepares a meal in a cluttered kitchen.

974ac5d0…

A lab technician conducts experiments using pipettes and test tubes.

9c956866…

A person sews a small pouch using a sewing machine.

a1262146…

A person crafts a clay sculpture at a table.

a3a71268…

Two people play a game of checkers on a wooden table.

a88cabdc…

A person washes clothes in a bathtub while intermittently watching a video on their phone.

afa330df…

A person washes dishes at a kitchen sink.

b4c5f426…

A woman crafts clay pots on the ground.

c94ea4e2…

A person organizes and cleans books on the floor.

c9ed0ee8…

A person cuts and prepares cardboard for a project.

ca9659f7…

A person prepares a meal by adding milk and water to a pot and organizing kitchen items.

cc4ccc21…

A person cooks and cleans in the kitchen.

d092fae6…

A person photographs a field and interacts with a group.

d5ea4b32…

A person creates a craft project at a table.

dd3d5867…

A person is gardening by weeding and planting in a raised bed and pot.

e1e76763…

A person prepares a meal in a modern kitchen.

e2c54dce…

A man works on a woodworking project in a workshop.

e4f08b6f…

A person walks through a house, brushes their teeth, and exits the bathroom.

ea99b807…

A person repots a plant using a trowel and soil bag.

edb5c7f4…

A person folds a cloth and transfers items between bags.

f5066bbf…

A man sands and polishes a metal pipe using power tools.

f75ea23d…

Two people work together to complete a 1000-piece emoji jigsaw puzzle.

fa2363f4…

A person knits a purple item in a living room.

fb82f807…

A person prepares potatoes in the kitchen.

fee05c7c…

A person cleans windows in a room.

Pipeline overview

Stage 1. V-JEPA 2 ViT-g-384 frame embeddings (window=64, stride=8, res=384²) → temporal-contiguous Ward agglomerative clustering → ~600+ tree nodes per clip.
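
The clustering step of Stage 1 can be sketched as follows. This is a minimal pure-Python illustration, not the pipeline's code: it assumes each window embedding is a plain list of floats, that only temporally adjacent segments may merge, and that each step picks the adjacent pair with the smallest Ward cost (the increase in within-segment squared error). The names `Node`, `ward_cost`, `temporal_ward_tree`, and `count_nodes` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass
class Node:
    start: int                      # first window index covered (inclusive)
    end: int                        # last window index covered (inclusive)
    size: int                       # number of windows in the segment
    mean: List[float]               # mean embedding of the segment
    left: Optional["Node"] = None   # earlier child (None for a leaf)
    right: Optional["Node"] = None  # later child (None for a leaf)


def ward_cost(a: Node, b: Node) -> float:
    """Increase in within-segment squared error if a and b are merged."""
    sq_dist = sum((x - y) ** 2 for x, y in zip(a.mean, b.mean))
    return a.size * b.size / (a.size + b.size) * sq_dist


def merge(a: Node, b: Node) -> Node:
    """Merge two adjacent segments into their parent node."""
    n = a.size + b.size
    mean = [(a.size * x + b.size * y) / n for x, y in zip(a.mean, b.mean)]
    return Node(a.start, b.end, n, mean, a, b)


def temporal_ward_tree(embeddings: Sequence[Sequence[float]]) -> Node:
    """Greedy bottom-up merging restricted to temporally adjacent segments."""
    if not embeddings:
        raise ValueError("need at least one embedding")
    segments = [Node(i, i, 1, list(e)) for i, e in enumerate(embeddings)]
    while len(segments) > 1:
        # Only neighbours (k, k + 1) are candidates, which keeps every
        # node a contiguous time span.
        k = min(range(len(segments) - 1),
                key=lambda i: ward_cost(segments[i], segments[i + 1]))
        segments[k:k + 2] = [merge(segments[k], segments[k + 1])]
    return segments[0]


def count_nodes(node: Optional[Node]) -> int:
    """Total number of nodes (leaves and internal) in the tree."""
    if node is None:
        return 0
    return 1 + count_nodes(node.left) + count_nodes(node.right)


# Toy 1-D "embeddings": two obvious temporal groups.
root = temporal_ward_tree([[0.0], [1.0], [10.0], [11.0]])
```

In the toy example the root splits into the spans 0-1 and 2-3. A binary merge tree over n leaves always has 2n − 1 nodes, so the node count per clip is determined by the number of embedding windows.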

Stage 2. Leaf nodes captioned by Llama-3.2-11B-Vision on the midpoint frame; internal nodes captioned by Perception-LM-3B on 32 evenly spaced frames at 320×320.
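
The two frame-selection rules in Stage 2 can be illustrated with a short sketch. The exact sampling convention is not stated here, so this version makes two assumptions: a node covers the half-open frame range `[start, end)`, and "evenly spaced" means the centers of 32 equal-width bins. Both function names are hypothetical.

```python
from typing import List


def midpoint_frame(start: int, end: int) -> int:
    """Frame index at the middle of the half-open range [start, end)."""
    if end <= start:
        raise ValueError("empty frame range")
    return start + (end - start) // 2


def evenly_spaced_frames(start: int, end: int, num: int = 32) -> List[int]:
    """Indices at the centers of `num` equal bins over [start, end).

    Integer arithmetic avoids float rounding. If the range holds fewer
    than `num` frames, some indices repeat.
    """
    if end <= start:
        raise ValueError("empty frame range")
    length = end - start
    return [start + ((2 * i + 1) * length) // (2 * num) for i in range(num)]


leaf_frame = midpoint_frame(0, 64)             # single frame for a leaf node
internal_frames = evenly_spaced_frames(0, 64)  # 32 frames for an internal node
```

Resizing the selected frames to 320×320 would be a separate step handled by whatever image library the captioning code uses.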

Stage 3. Nodes ≥4s aggregated by gpt-4o-2024-08-06 using global-tree-context + current-subtree-markdown, with 3-round Self-Refine and JSON-Schema-strict structured outputs ({summary, action}).
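
The Stage 3 control flow can be sketched without any network calls. This is an illustration under stated assumptions, not the pipeline's code: `llm` is a hypothetical callable that takes a prompt string and returns text, both output fields are assumed to be strings, the prompts are placeholders, and "3-round" is read as one initial draft followed by three critique-and-revise iterations. `RESPONSE_FORMAT` shows the shape a strict JSON Schema takes in a structured-output request; it is defined here only for reference and is not sent anywhere.

```python
import json
from typing import Callable, Dict

# Strict structured outputs need every property listed as required and
# additionalProperties set to False. Field types are an assumption.
NODE_SCHEMA = {
    "type": "object",
    "properties": {
        "summary": {"type": "string"},
        "action": {"type": "string"},
    },
    "required": ["summary", "action"],
    "additionalProperties": False,
}

RESPONSE_FORMAT = {
    "type": "json_schema",
    "json_schema": {"name": "node_annotation", "strict": True,
                    "schema": NODE_SCHEMA},
}

MIN_DURATION_S = 4.0  # only nodes at least this long are aggregated
NUM_ROUNDS = 3        # assumed meaning of "3-round Self-Refine"


def should_aggregate(duration_s: float) -> bool:
    return duration_s >= MIN_DURATION_S


def validate(obj: Dict[str, str]) -> Dict[str, str]:
    """Minimal local check mirroring NODE_SCHEMA."""
    if set(obj) != {"summary", "action"}:
        raise ValueError(f"unexpected keys: {sorted(obj)}")
    if not all(isinstance(v, str) for v in obj.values()):
        raise ValueError("summary and action must be strings")
    return obj


def self_refine(llm: Callable[[str], str], global_context: str,
                subtree_markdown: str,
                rounds: int = NUM_ROUNDS) -> Dict[str, str]:
    """Draft once, then alternate critique and revision `rounds` times."""
    base = (f"Global tree context:\n{global_context}\n\n"
            f"Current subtree:\n{subtree_markdown}\n\n")
    current = validate(json.loads(
        llm(base + "Return JSON with keys summary and action.")))
    for _ in range(rounds):
        draft = json.dumps(current)
        feedback = llm(base + f"Draft:\n{draft}\n\nCritique this draft.")
        current = validate(json.loads(
            llm(base + f"Draft:\n{draft}\n\nFeedback:\n{feedback}\n\n"
                "Return the revised JSON.")))
    return current
```

Under this reading each aggregated node costs seven model calls (one draft plus three critique and revision pairs), which is worth keeping in mind when estimating the cost of a tree with hundreds of nodes.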

Full method documentation: README · Source code (private): streaming_benchmark/src/{stage1,stage2,stage3}_*.py